Why WikiGuard?

Why Wikipedia monitoring matters for brand reputation and competitive intelligence.

What we don't do

WikiGuard is a monitoring tool. We don't edit Wikipedia pages, and we don't help manipulate articles. We provide alerts, diffs, and history so your team can respond appropriately.

Workflow for PR Teams & Agencies

- Create a client and set its Slack/Discord destinations.

- Assign monitored pages to that client.

- Get alerts routed to the correct client channel the moment an edit happens.

- Review the diff and decide the right response for your organization.

Client routing, available on Overwatch, prevents cross-client mistakes. Unassigned pages still use global email and browser notifications, while Slack/Discord alerts are sent only for pages assigned to a client.

The Seigenthaler Incident

A journalist falsely linked to the JFK assassination — for 132 days.

On May 26, 2005, an anonymous Wikipedia editor created a biographical entry about John Seigenthaler, a veteran journalist, former administrative assistant to Attorney General Robert F. Kennedy, and the founding editorial director of USA Today. The entry contained a fabricated claim:

"John Seigenthaler Sr. was the assistant to Attorney General Robert Kennedy in the early 1960's. For a brief time, he was thought to have been directly involved in the Kennedy assassinations of both John, and his brother, Bobby. Nothing was ever proven."

The false accusation sat on Wikipedia — completely undetected — for 132 days. During that time it was indexed by Google, mirrored by Reference.com, Answers.com, and other sites that scraped Wikipedia content. A friend of Seigenthaler's stumbled upon the entry in September 2005 and alerted him.

The fallout was significant:

- Seigenthaler wrote an op-ed in USA Today titled "A false Wikipedia biography," calling it "Internet character assassination"

- The perpetrator, Brian Chase — a 38-year-old operations manager in Nashville — admitted it was "a joke that went horribly, horribly wrong" and resigned from his job

- The incident forced Wikipedia to restrict article creation by unregistered users and introduce the Biographies of Living Persons (BLP) policy

- It was covered by The New York Times, The Guardian, CNN, and dozens of international outlets

CongressEdits: When Politicians Got Caught

How a Twitter bot exposed U.S. congressional staffers secretly editing Wikipedia.

In January 2006, journalists discovered that congressional staffers were routinely editing their bosses' Wikipedia pages from government IP addresses. The edits weren't subtle:

- Rep. Marty Meehan's office replaced his entire biography with staff-written content, deleting references to broken campaign promises

- Sen. Joe Biden's staff removed mentions of plagiarism allegations from his page

- Rep. Gil Gutknecht's office used the account "Gutknecht01" to swap unfavorable content with flattering material from his official biography

Wikipedia investigators found over 1,000 edits from House IP addresses in just six months. But the story didn't end there.

In 2014, developer Ed Summers created @CongressEdits, a Twitter bot that automatically tweeted every time a Wikipedia edit was made from a congressional IP address. The bot quickly exposed absurd edits: conspiracy theories about moon landings, claims that Donald Rumsfeld was an "alien lizard," and references to reptilians. Wikipedia administrators temporarily banned the congressional IP address for "disruptive editing."

Corporate Wiki-Washing

When billion-dollar companies got caught editing their own Wikipedia pages.

In 2007, Virgil Griffith — a Caltech graduate student — launched WikiScanner, a tool that cross-referenced anonymous Wikipedia edits with known corporate and government IP addresses. The results made headlines worldwide:

- Coca-Cola: Computers at Coca-Cola changed the description of Dasani water from "bottled" to "spring water" — a significant misrepresentation, since Dasani is purified tap water

- PepsiCo: Employees deleted discussions about controversial imagery in their Aunt Jemima brand

- Glencore & Dow Chemical: References to alleged corrupt dealings and industrial disasters were repeatedly removed from their entries by editors working from company premises

- Church of Scientology: In 2009, Wikipedia banned all IP addresses owned by the Church of Scientology after WikiScanner revealed systematic removal of criticism from Scientology-related articles

More recently, in 2019, The North Face and ad agency Leo Burnett ran a campaign that replaced photos on Wikipedia pages for famous outdoor destinations (El Chalten, Guarita State Park) with branded images of people wearing North Face gear, specifically to manipulate Google Image search results. The Wikimedia Foundation publicly condemned the scheme as "unethical," and The North Face issued a public apology.

The Underlying Problem

Wikipedia occupies a unique position in the information ecosystem. It's simultaneously:

- One of the most cited sources on the internet: journalists, researchers, and everyday people reference it constantly

- Editable by almost anyone: no credentials required, and no approval process for most edits

- Treated as authoritative by search engines: Google often pulls Wikipedia content directly into search results

This creates a dangerous asymmetry. Your Wikipedia page has enormous influence over your reputation, but you have almost no control over what it says—and often no visibility into when it changes.

Traditional approaches to monitoring are inadequate:

| Approach | Problem |
|---|---|
| Manual checking | Unsustainable, changes slip through |
| Wikipedia watchlist | Requires Wikipedia account, easy to forget |
| Google Alerts | Only catches major changes, delayed |
| PR agency monitoring | Expensive, often not real-time |

How WikiGuard Works

WikiGuard provides automated, real-time monitoring of Wikipedia pages with features designed specifically for reputation management and competitive intelligence.

1. Real-Time Detection — Every Edit, Every Second

Every WikiGuard plan includes real-time monitoring powered by Wikipedia's official EventStreams feed, a live stream of every edit across all of Wikipedia. When someone changes a page you're watching, we detect it within seconds instead of waiting for the next polling cycle. As an additional safety net, our scheduler also polls your pages at regular intervals (as frequently as every 30 minutes for Overwatch subscribers) to catch anything the stream might miss during brief interruptions.
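To make the streaming idea concrete, here is a minimal sketch of how a consumer could filter the public EventStreams `recentchange` feed down to a set of watched pages. It is not WikiGuard's implementation. The function name `watched_edits` and the `(wiki, title)` watch-set shape are assumptions made for illustration; the event fields (`type`, `wiki`, `title`) follow the public `recentchange` event format.

```python
import json
from typing import Iterable, Iterator

# Public Wikimedia EventStreams endpoint for recent changes (Server-Sent Events).
STREAM_URL = "https://stream.wikimedia.org/v2/stream/recentchange"


def watched_edits(
    sse_lines: Iterable[str], watched: set[tuple[str, str]]
) -> Iterator[dict]:
    """Yield edit events whose (wiki, title) pair is in the watched set.

    Hypothetical helper: `sse_lines` is any iterable of raw SSE text lines.
    """
    for line in sse_lines:
        # SSE payload lines start with "data:"; skip ids, event names and blanks.
        if not line.startswith("data:"):
            continue
        try:
            event = json.loads(line[len("data:"):].strip())
        except json.JSONDecodeError:
            continue  # ignore partial or malformed payloads
        if event.get("type") != "edit":
            continue  # skip log entries, page creations, categorization events
        if (event.get("wiki"), event.get("title")) in watched:
            yield event


# Offline demonstration with hand-written sample lines.
sample = [
    "event: message",
    'data: {"type": "edit", "wiki": "enwiki", "title": "Acme Corp", "user": "Example"}',
    'data: {"type": "edit", "wiki": "enwiki", "title": "Other page", "user": "Someone"}',
    "",
]
for edit in watched_edits(sample, {("enwiki", "Acme Corp")}):
    print(edit["title"], "edited by", edit["user"])
```

In a live setup the lines would come from a long-lived HTTP connection to `STREAM_URL` rather than a list, and a periodic poll would cover any gap while that connection is down.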

Add a Wikipedia page and start monitoring it in seconds.

Import a Wikipedia watchlist in seconds and start monitoring pages in bulk.

2. Intelligent Diff Analysis

Unlike simple "something changed" alerts, WikiGuard shows you exactly what changed. Our diff viewer highlights additions, deletions, and modifications at the word level, making it easy to assess the significance of any edit.
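As an illustration of what a word-level diff involves, the sketch below compares two revisions word by word using Python's standard `difflib`. This is an assumed approach for explanation only, not a description of WikiGuard's diff engine, and `word_diff` is a hypothetical name.

```python
import difflib


def word_diff(old: str, new: str) -> list[tuple[str, str]]:
    """Return (operation, text) pairs describing a word-level diff."""
    old_words, new_words = old.split(), new.split()
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue  # unchanged run of words
        if tag in ("delete", "replace"):
            changes.append(("removed", " ".join(old_words[i1:i2])))
        if tag in ("insert", "replace"):
            changes.append(("added", " ".join(new_words[j1:j2])))
    return changes


print(word_diff("The company sells bottled water",
                "The company sells spring water"))
# [('removed', 'bottled'), ('added', 'spring')]
```

Splitting on whitespace keeps the example short; a production diff would also need to handle wiki markup, punctuation, and moved paragraphs.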

3. Instant Email Alerts

The moment a change is detected, you receive a detailed email notification with the diff summary, editor information, and a direct link to the Wikipedia revision. Configure smart notification filters to ignore trivial edits and only alert on changes that matter.

Fine-tune your notification preferences to stay informed without the noise.

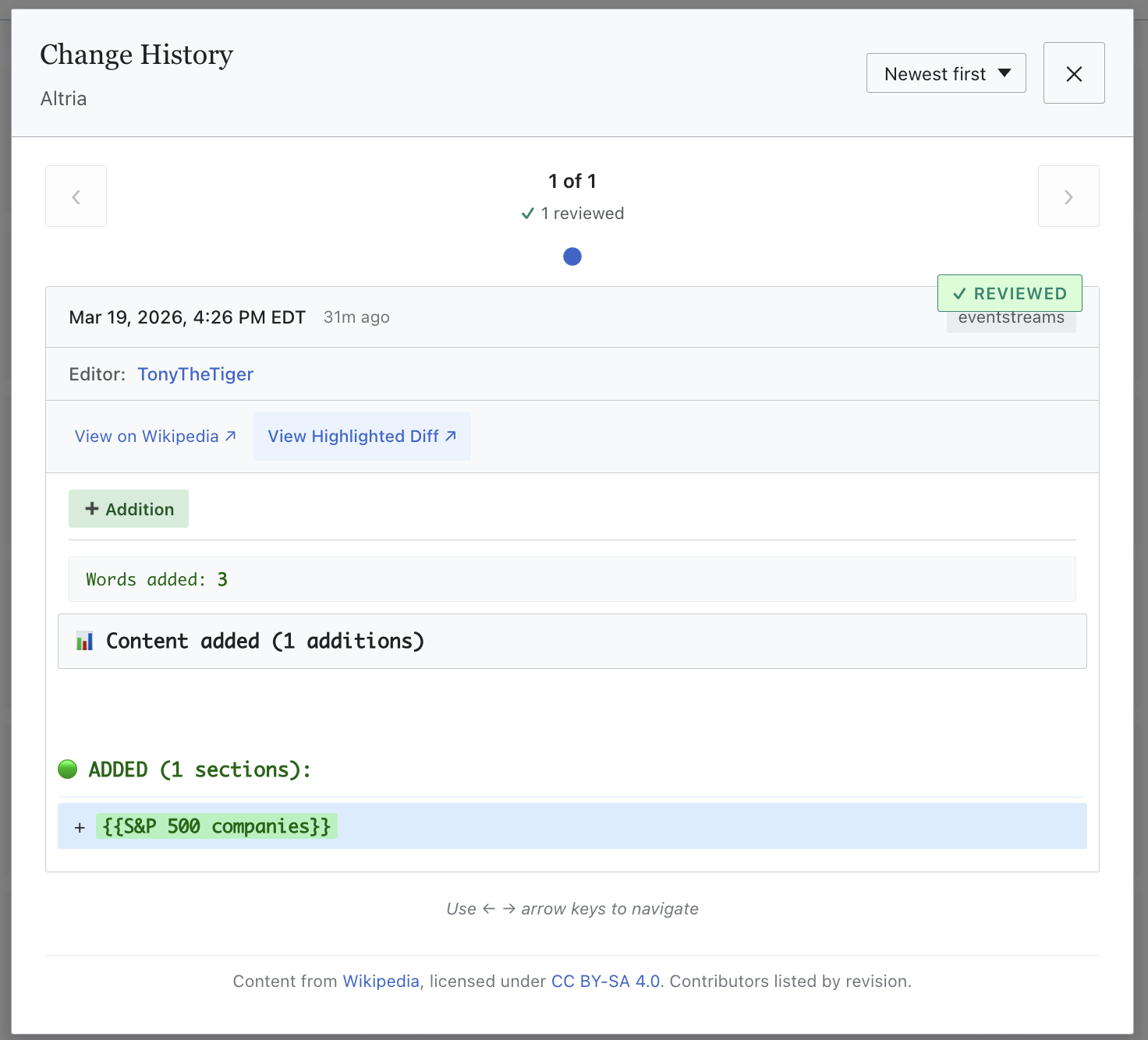
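A notification filter of this kind can be thought of as a small predicate over each detected edit. The sketch below is one possible shape, under stated assumptions: the `FilterRules` fields and the 20-byte default are invented for illustration and are not WikiGuard's actual settings. The `minor`, `bot`, and `length` keys mirror fields that appear in public `recentchange` events.

```python
from dataclasses import dataclass


@dataclass
class FilterRules:
    """Hypothetical filter settings; names and defaults are illustrative."""
    skip_minor: bool = True      # edits the editor flagged as minor
    skip_bots: bool = True       # edits made by bot accounts
    min_bytes_changed: int = 20  # ignore very small size changes


def should_alert(edit: dict, rules: FilterRules) -> bool:
    """Decide whether an edit is significant enough to notify on."""
    if rules.skip_minor and edit.get("minor", False):
        return False
    if rules.skip_bots and edit.get("bot", False):
        return False
    length = edit.get("length") or {}
    # Absolute change in page size, treating missing values as zero.
    delta = abs((length.get("new") or 0) - (length.get("old") or 0))
    return delta >= rules.min_bytes_changed


edit = {"minor": False, "bot": False, "length": {"old": 1000, "new": 1400}}
print(should_alert(edit, FilterRules()))
```

Size change is only a rough proxy: replacing one word with another of the same length changes zero bytes, so a stricter setup might set `min_bytes_changed` to 0 and rely on the diff itself.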

4. Complete History

Every detected change is logged with full context: timestamp, editor information, and the complete diff. Build a comprehensive record of how Wikipedia pages evolve over time.
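For readers who want a mental model of such a log, here is a minimal sketch using SQLite from the Python standard library. The table layout and the function names (`open_history`, `log_change`, `page_history`) are assumptions for illustration and say nothing about how WikiGuard stores data. It also assumes a single wiki, so the revision ID alone identifies a change.

```python
import sqlite3


def open_history(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a change log; the schema is illustrative."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS changes (
            revision_id INTEGER PRIMARY KEY,  -- Wikipedia revision ID
            page_title  TEXT NOT NULL,
            edited_at   TEXT NOT NULL,        -- ISO 8601 timestamp in UTC
            editor      TEXT NOT NULL,
            diff        TEXT NOT NULL
        )
        """
    )
    return conn


def log_change(conn: sqlite3.Connection, revision_id: int, page_title: str,
               edited_at: str, editor: str, diff: str) -> None:
    """Record one change; repeated revisions are ignored."""
    # INSERT OR IGNORE keeps the log idempotent when the same revision is
    # seen twice, for example once from the stream and once from a poll.
    with conn:
        conn.execute(
            "INSERT OR IGNORE INTO changes VALUES (?, ?, ?, ?, ?)",
            (revision_id, page_title, edited_at, editor, diff),
        )


def page_history(conn: sqlite3.Connection, page_title: str) -> list[tuple]:
    """Return a page's logged changes, oldest first."""
    rows = conn.execute(
        "SELECT revision_id, edited_at, editor, diff FROM changes "
        "WHERE page_title = ? ORDER BY edited_at",
        (page_title,),
    )
    return rows.fetchall()


conn = open_history()
log_change(conn, 101, "Acme Corp", "2024-01-02T10:00:00Z", "Example", "+spring")
log_change(conn, 101, "Acme Corp", "2024-01-02T10:00:00Z", "Example", "+spring")
print(len(page_history(conn, "Acme Corp")))  # 1
```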

5. Team Routing (Overwatch)

On Overwatch, Slack and Discord alerts are configured per client group. Assign a page to a client, set that client's webhook, and alerts route to the correct team channel. Unassigned pages still use global email and browser notifications.
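The routing rule described above can be sketched in a few lines. This is an illustrative model only: the `Client` class, the `destinations` function, and the example webhook URLs are hypothetical, and it assumes that global email and browser notifications apply to every page while webhooks apply only to assigned pages.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Client:
    """Hypothetical client group with its own Slack/Discord webhooks."""
    name: str
    webhooks: list[str] = field(default_factory=list)


def destinations(page: str, assignments: dict[str, Client]) -> list[str]:
    """Return the channels an alert for `page` should be sent to."""
    # Assumption: global email and browser notifications cover every page.
    targets = ["email", "browser"]
    client: Optional[Client] = assignments.get(page)
    if client is not None:
        # Only pages assigned to a client get Slack/Discord delivery.
        targets.extend(client.webhooks)
    return targets


acme = Client("Acme", ["https://hooks.example.invalid/acme-slack"])
assignments = {"Acme Corp": acme}
print(destinations("Acme Corp", assignments))
print(destinations("Unassigned page", assignments))
```

Because each page maps to at most one client, an alert for one client's page has no path into another client's channel.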

Ready to protect your reputation?

Start monitoring your Wikipedia pages today. Plans start at $10/month.

View Pricing →